
Regression Testing Example: A Risk-Based Workflow for Manual QA

A practical regression testing example using a real QA workflow, not just theory.

This regression testing example shows my manual QA workflow in practice, backed by a real one-week solo pass on Sworn (PC Game Pass, Windows). I break down how I scope regression testing from change signals, apply risk-based testing, verify the golden path first, and capture evidence that makes results credible.

TL;DR: Regression Testing Example

- Workflow shown: a manual regression testing workflow using risk-based scoping → golden-path baseline → targeted probes → evidence-backed results.

- Example context: Sworn on PC Game Pass (Windows) used as a real regression testing example in game QA.

- Build context: tested on the PC Game Pass build 1.01.0.1039.

- Scope driver: public SteamDB patch notes used as an external change signal for regression testing (no platform parity assumed).

- Outputs: a regression testing matrix with line-by-line outcomes, session timestamps, and bug tickets with evidence.

Regression testing scope: what I verified and why

This regression testing example is based on a self-directed manual QA regression testing pass on Sworn using the PC Game Pass (Windows) build 1.01.0.1039, run in a one-week solo timebox. The regression testing process was change-driven and risk-based: golden-path stability (launch → play → quit → relaunch), save/continue integrity, core menus, audio sanity, input handover, plus side-effect probes suggested by upstream patch notes. No Steam/Game Pass parity claim is made.

What is regression testing? (with example)

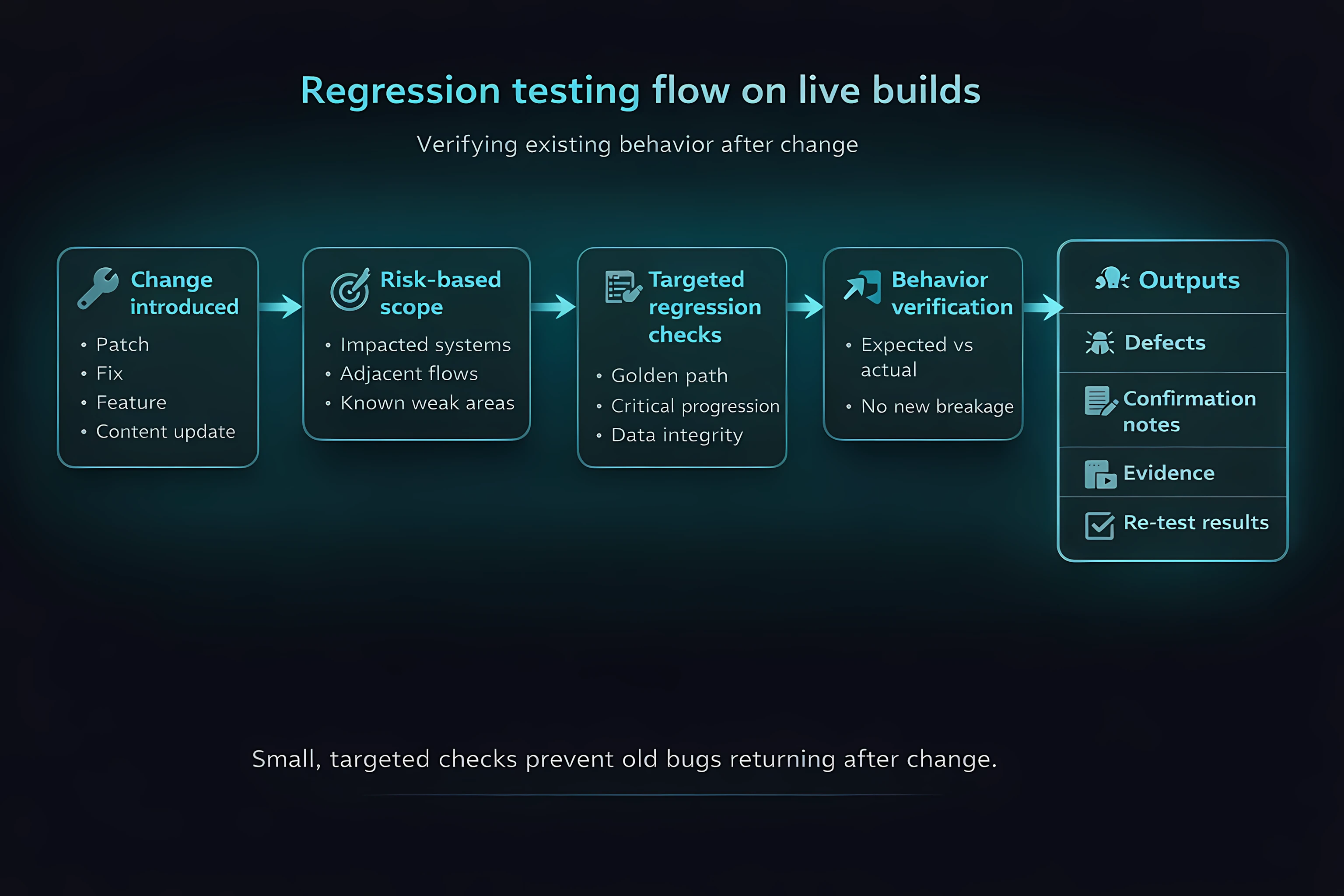

In this regression testing example, regression testing means verifying that existing behaviour still works after a change. In manual QA, this is not about re-testing everything or blindly following a checklist. It is about checking whether previously working systems still hold under new conditions.

A regression testing workflow is selective by design. Coverage is driven by risk: what is most likely to have been impacted, what is most expensive if broken, and what must remain stable for the build to be trusted.
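One way to make that selection explicit is to score each candidate area against the three risk questions. The sketch below is illustrative only: the `Candidate` type, the 1-to-3 scales, the weighting, and the example scores are all assumptions, not the scoring used in this pass.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical regression candidate; every score here is illustrative."""
    area: str
    likelihood: int       # 1-3: how likely the change touched this area
    cost: int             # 1-3: how expensive a break would be
    trust_critical: bool  # must hold for the build to be trusted at all


def priority(c: Candidate) -> int:
    # Trust-critical areas always sort ahead of everything else;
    # the remaining areas are ranked by likelihood multiplied by cost.
    return (100 if c.trust_critical else 0) + c.likelihood * c.cost


candidates = [
    Candidate("codex navigation", likelihood=2, cost=1, trust_critical=False),
    Candidate("launch and relaunch", likelihood=1, cost=3, trust_critical=True),
    Candidate("music continuity", likelihood=3, cost=2, trust_critical=False),
]

# Highest priority first: this is the order in which areas get tested.
ordered = sorted(candidates, key=priority, reverse=True)
for c in ordered:
    print(f"{priority(c):>3}  {c.area}")
```

The exact numbers matter less than writing them down: a reviewer can disagree with a score, but cannot disagree with an unstated hunch.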

Regression testing results: pass/fail outcomes with evidence

Regression testing produces clear outcomes: pass or fail, backed by evidence and repeatable verification. Not opinions. Not vibes.

Regression testing baseline: golden-path smoke testing checks

I start every regression testing cycle with a repeatable golden-path smoke testing baseline because it prevents wasted time. Smoke testing in regression testing acts as a quick stability check before deeper testing begins. If the baseline is unstable, everything that follows is noise.

In this regression testing example, the baseline matrix line was BL-SMOKE-01: cold launch → main menu → gameplay → quit to desktop → relaunch → main menu. I also included a quick sanity check for audio cutouts during this flow.
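The baseline behaves like a gate: the steps run in order and the first failure stops the pass. A minimal sketch of that idea follows, assuming the tester records each manual observation as true or false. The function name, the step labels, and the failure at relaunch are hypothetical and are not results from this pass.

```python
from typing import Callable

# Step names follow BL-SMOKE-01; the observe callable stands in for a
# manual observation and is not an automation hook.
SMOKE_STEPS = [
    "cold launch",
    "main menu",
    "gameplay",
    "quit to desktop",
    "relaunch",
    "main menu after relaunch",
]


def run_baseline(observe: Callable[[str], bool]) -> tuple[bool, list[str]]:
    """Walk the steps in order and stop at the first one that fails."""
    completed = []
    for step in SMOKE_STEPS:
        if not observe(step):
            return False, completed
        completed.append(step)
    return True, completed


# Hypothetical recording with a failure at relaunch, for illustration only.
recorded = {step: True for step in SMOKE_STEPS}
recorded["relaunch"] = False

stable, completed = run_baseline(lambda step: recorded[step])
print("baseline stable:", stable)
print("steps completed before stopping:", completed)
```

Stopping at the first failure is deliberate: anything observed after a broken step is hard to trust.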

Why smoke testing matters in regression testing

The golden path covers the most common player actions (launch, play, quit, resume). If these baseline checks fail, regression testing results become unreliable due to cascading failures that appear unrelated.

Regression testing scope: risk-based testing and change signals

In this regression testing example, I scoped regression testing using a risk-based testing approach driven by change signals. For this project, I used SteamDB patch notes as an external oracle: SWORN 1.0 Patch #3 (v1.0.3.1111), 13 Nov 2025. This does not mean I assumed those changes were present on PC Game Pass.

Instead, I used the patch notes as a change signal to guide regression testing scope and decide where to probe for side effects on the Game Pass build. This approach is useful when working in manual QA without internal access, studio data, or platform-specific changelogs.

Regression testing results: pass vs not applicable (with evidence)

SteamDB notes mention a fix for music cutting out, so I ran an audio runtime probe (STEA-103-MUSIC) and verified music continuity across combat, pause/unpause, and a level load (pass). SteamDB also mentions a Dialogue Volume slider. On the Game Pass build that control was not present, so the regression test was recorded as not applicable with evidence of absence (STEA-103-AVOL).

Regression testing matrix example (manual QA)

In this regression testing example, my regression testing matrix is structured to be auditable and easy to review. Each line in the matrix includes a direct check, a side-effect check, a clear outcome, and an evidence link. This keeps regression testing results traceable and prevents “I think it’s fine” reporting.

- Baseline smoke test: BL-SMOKE-01

- Settings persistence check: BL-SET-01

- Save / Continue integrity: BL-SAVE-01

- Post-death flow sanity: BL-DEATH-01

- Audio runtime continuity test: STEA-103-MUSIC

- Audio settings presence check: STEA-103-AVOL

- Codex / UI navigation test: STEA-103-CODEX

- Input handover + hot plug: BL-IO-01

- Alt+Tab behaviour check: BL-ALT-01

- Enhancement spend + ownership persistence: BL-ECON-01
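The four fields each matrix line carries can be sketched as a small record type. This is an illustrative structure, not the format of the actual matrix: the class and field names are assumptions, the split of STEA-103-MUSIC into a direct check and a side-effect check is my reading of the description, and the evidence string is a placeholder.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PASS = "pass"
    FAIL = "fail"
    NOT_APPLICABLE = "not applicable"


@dataclass(frozen=True)
class MatrixLine:
    line_id: str
    direct_check: str
    side_effect_check: str
    outcome: Outcome
    evidence: str  # link or clip reference; the value used below is a placeholder


def is_reviewable(line: MatrixLine) -> bool:
    """A line is reviewable only when every text field is filled in."""
    fields = (line.line_id, line.direct_check, line.side_effect_check, line.evidence)
    return all(value.strip() for value in fields)


music_line = MatrixLine(
    line_id="STEA-103-MUSIC",
    direct_check="music stays continuous during runtime with combat transitions",
    side_effect_check="music survives pause/unpause and a level load",
    outcome=Outcome.PASS,
    evidence="session S3 clip (placeholder reference)",
)

print(music_line.line_id, music_line.outcome.value, is_reviewable(music_line))
```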

Save/Continue regression testing: repeatable verification using anchors

Save and Continue flows are a common regression testing risk area because failures can appear intermittent. In manual QA, regression testing needs repeatable verification, not assumptions. To reduce ambiguity, I verify using anchors.

In this regression testing example (BL-SAVE-01), I anchored four values: room splash name (Wirral Forest), health bucket (60/60), weapon type (sword), and the start of the objective text. I then verified those anchors twice: after menu Continue and again after a full relaunch. Outcome: pass, anchors matched throughout (session S2).

Why anchors improve regression testing verification

In regression testing, “Continue worked” is not a valid result if it cannot be reproduced. Anchors turn vague outcomes into repeatable verification, making regression testing results consistent and reviewable.
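Anchor verification amounts to comparing a recorded snapshot against what is observed later. A minimal sketch follows, using the three anchor values quoted for BL-SAVE-01; the objective-text anchor is left out because its wording is not quoted here. The dictionary layout, the function name, and the mismatching health value are assumptions for illustration.

```python
# Anchor values come from BL-SAVE-01; the dict layout is an assumption.
baseline = {
    "room": "Wirral Forest",
    "health": "60/60",
    "weapon": "sword",
}


def anchor_mismatches(expected, observed):
    """Return a description for every anchor that is missing or changed."""
    problems = []
    for name, want in expected.items():
        got = observed.get(name)
        if got != want:
            problems.append(f"{name}: expected {want!r}, observed {got!r}")
    return problems


# Matching anchors, as recorded after menu Continue and after relaunch.
after_continue = dict(baseline)
print(anchor_mismatches(baseline, after_continue))

# Hypothetical failure case, not observed in this pass.
hypothetical = {**baseline, "health": "45/60"}
print(anchor_mismatches(baseline, hypothetical))
```

An empty list is the pass condition; anything else names the anchor that drifted, which is what a bug report would need.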

QA evidence for regression testing: what to capture and why

In regression testing, QA evidence matters for passes as much as failures. In manual QA, every result is a claim that needs to be supported by clear test evidence and documentation.

- Video clips: capture input, timing, and outcome together (ideal for regression testing flow and audio checks).

- Screenshots: support UI state, menu presence or absence, and bug report clarity.

- Session timestamps: keep regression testing verification reviewable without scrubbing long recordings.

- Environment notes: document platform, build, input devices, and network state (for reproducible QA testing conditions).

If QA evidence cannot answer what was tested, what happened, and what should have happened, it is not useful test documentation.
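Those three questions can double as a quick completeness check before a result is filed. The sketch below is an assumption about how such a check might look: the key names and the helper are hypothetical, the first record paraphrases SWOR-6, and the second is an invented incomplete example.

```python
# The three questions any piece of evidence should answer.
QUESTIONS = {
    "tested": "What was tested?",
    "observed": "What happened?",
    "expected": "What should have happened?",
}


def unanswered(record):
    """List the questions a record leaves blank or missing."""
    return [
        question
        for key, question in QUESTIONS.items()
        if not str(record.get(key, "")).strip()
    ]


# Paraphrased from SWOR-6.
swor_6 = {
    "tested": "pressing Continue after Defeat",
    "observed": "Defeat stays in the foreground and a new run starts automatically",
    "expected": "Stats screen shown in the foreground, waiting for confirmation",
}

# Invented example of a record that is not yet useful documentation.
vague = {"tested": "Continue button", "observed": ""}

print(unanswered(swor_6))
print(unanswered(vague))
```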

Regression testing example: real QA bugs and test results

QA bug example: Defeat overlay blocks the Stats screen (SWOR-6)

Bug: [PC][UI][Flow] Defeat overlay blocks Stats; Continue starts a new run (SWOR-6).

Expectation: after Defeat, pressing Continue reveals the full Stats screen in the foreground and waits for player confirmation.

Actual: Defeat stays in the foreground, Stats renders underneath with a loading icon, then a new run starts automatically.

Outcome: the player cannot review Stats before the new run starts.

Repro rate: 3/3, observed during regression testing verification (S2) and reconfirmed in a dedicated re-test (S6).

Regression testing example: music continuity check (STEA-103-MUSIC)

SteamDB notes mention a fix for music cutting out, so I ran STEA-103-MUSIC: 10 minutes runtime with combat transitions, plus pause/unpause and a level load. Outcome: pass, music stayed continuous across those transitions (S3).

Regression testing result: “not applicable” example (STEA-103-AVOL)

SteamDB notes mention a Dialogue Volume slider, but on the Game Pass build the Audio menu only showed Master, Music, and SFX. Outcome: not applicable with evidence of absence (STEA-103-AVOL, S4). This avoids inventing parity and keeps regression testing results accurate.
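The decision behind a "not applicable" result can be written as a small rule: check presence first, and refuse to record any outcome without evidence. This is a sketch under assumptions: the function name and signature are hypothetical, and the evidence string is a placeholder rather than the actual artefact.

```python
def record_outcome(feature_present, check_passed, evidence):
    """Resolve a matrix outcome; every outcome needs evidence behind it."""
    if not evidence.strip():
        raise ValueError("every outcome, including not applicable, needs evidence")
    if not feature_present:
        # Absence on this build is a recorded finding, not a skipped test.
        return "not applicable"
    return "pass" if check_passed else "fail"


# Controls seen in the Audio menu on the Game Pass build (STEA-103-AVOL).
observed_controls = ["Master", "Music", "SFX"]
expected_control = "Dialogue Volume"

outcome = record_outcome(
    feature_present=expected_control in observed_controls,
    check_passed=False,  # never evaluated, because the control is absent
    evidence="session S4 capture of the Audio menu (placeholder reference)",
)
print(outcome)
```

Raising on missing evidence keeps "not applicable" from becoming a quiet way to skip a line.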

Accessibility issues logged as a known cluster (no new build to re-test)

On Day 0 (S0), I captured onboarding accessibility issues as a known cluster (B-A11Y-01: SWOR-1, SWOR-2, SWOR-3, SWOR-4). Because no newer build was available during the week, a regression re-test was not applicable and remains pending until a new build exists. This is logged explicitly rather than implied.

Regression testing results snapshot (for transparency)

In this regression testing example, the matrix recorded: 8 pass, 1 fail, 1 not applicable, plus 1 known accessibility cluster captured on Day 0 with no newer build available for re-test. Counts are included for context, not as the focus of the article.

Regression testing takeaways: key points from this QA example

- Regression testing is change-driven verification, not “re-test everything”.

- A manual regression testing workflow should start with a repeatable golden-path baseline.

- Risk-based regression testing helps prioritise what to verify after changes.

- External patch notes can be used as a change signal without assuming platform parity.

- Anchors make regression testing verification credible and repeatable.

- “Not applicable” is a valid regression testing result when supported with evidence.

- Pass results require QA evidence too, because they are still claims.

Regression testing FAQ (manual QA example)

What is regression testing in QA?

Regression testing verifies that existing behaviour still works after a change. In manual QA, it focuses on previously working systems, whether or not bugs were ever logged against them.

Is regression testing just re-testing old bugs?

No. Regression testing includes re-checking previously fixed bugs, but its main purpose is to confirm that existing functionality has not been broken by new changes.

Do you need to re-test everything in regression testing?

No. Effective regression testing is selective. A risk-based regression testing approach focuses on areas most likely to be affected by change, rather than testing every feature.

How do you scope regression testing without internal patch notes?

By using external change signals such as public patch notes, previous builds, and observed behaviour as testing oracles, without assuming platform parity.

What is the difference between regression testing and exploratory testing?

Regression testing verifies known behaviour after change. Exploratory testing is used to discover unknown risks and unexpected behaviour. Both are important in a complete QA testing workflow.

Is a “pass” result meaningful in regression testing?

Yes. A pass is still a claim. In regression testing, pass results should be supported with QA evidence to ensure they are credible and repeatable.

When is “not applicable” a valid regression testing result?

When a feature is not present on the build under test and that absence is confirmed with evidence. Logging this explicitly is more accurate than assuming parity or skipping the test silently.

Regression testing example: evidence and case study links

- Regression testing case study (Sworn): full QA artefacts and evidence

- SWORN patch notes (external change signal for regression testing)

This regression testing example focuses on workflow and methodology. The case study includes the full regression testing matrix, session logs, bug reports, and QA evidence used to support test results.
