
Game QA Testing Strategy: Why Testing Everything Is a Waste of Time

An applied game QA testing article, not a definition page and not a case study.

This article is based on a short game QA testing pass on an early build of The Chef’s Shift (PC, v0.1.2a). It shows how I approach risk-based testing, prioritise the core gameplay loop, and avoid wasting time on low-value test coverage. The goal is simple: find what breaks first, and focus testing effort where it actually matters.

TL;DR

- Point: in game QA testing, early build passes should be selective, not exhaustive.

- Example context: The Chef’s Shift on PC, tested across 8 sessions in a 2-day pass.

- Main finding: a repeat-loop test exposed a core gameplay loop blocker quickly (CHEF-1).

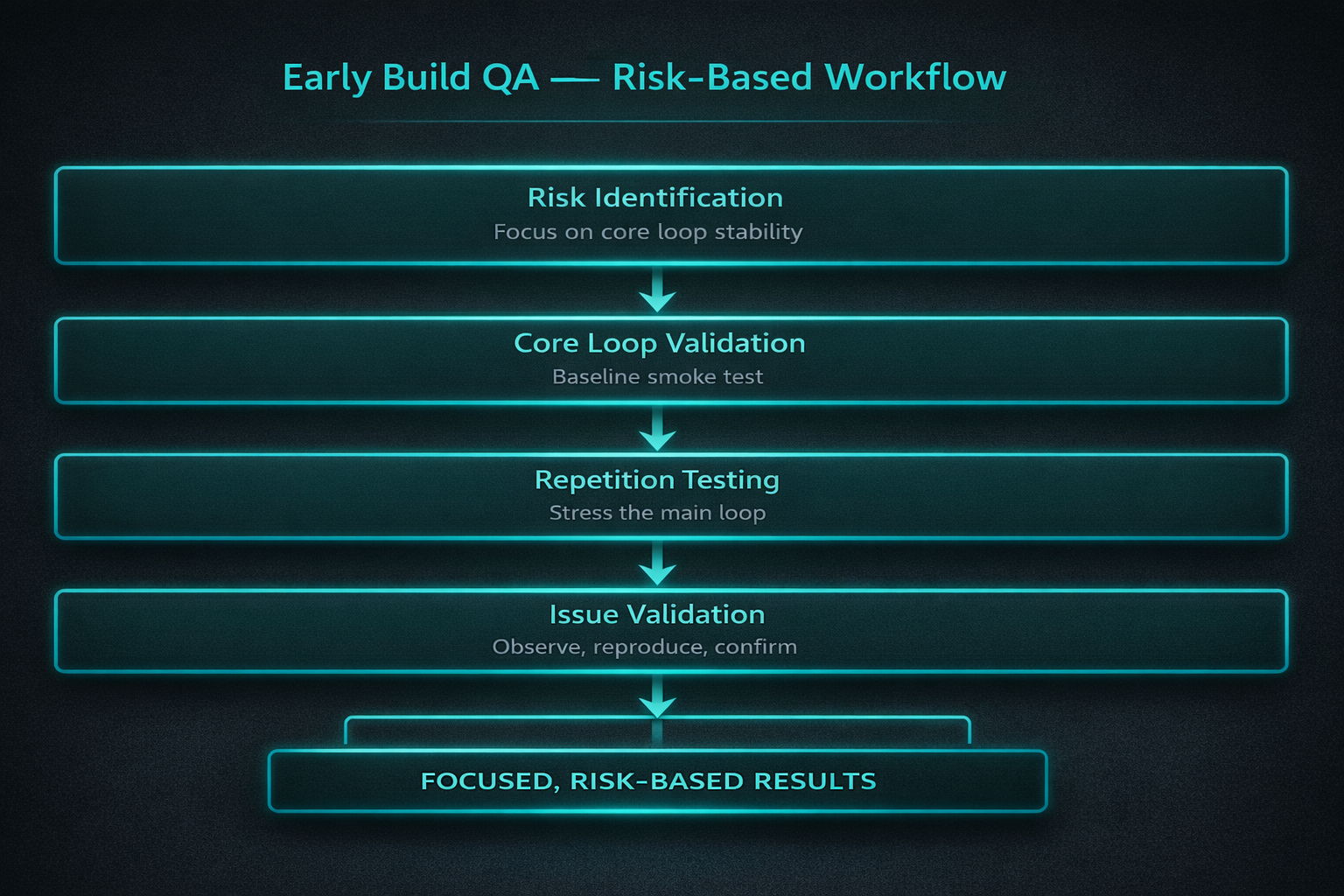

- Method: risk-based testing, with baseline first, repetition second, then targeted follow-up on the pressure points that mattered.

- Takeaway: the best early build tests are the ones most likely to break progression, not the ones that make your spreadsheet look busy.

Game QA testing scope: what I tested and why

This article is grounded in a self-directed game QA testing pass on an early PC build of The Chef’s Shift (v0.1.2a), run as a 2-day focused pass.

Scope was deliberately narrow, following a risk-based testing approach: core gameplay loop stability, input handling, serving flow, recovery/progression, and accessibility-related pressure points. The purpose was not “full coverage”, but to find the failures most likely to disrupt progression or confuse a first-time player.

What “testing everything” gets wrong in game QA testing

In early builds, “test everything” looks like the safest approach, but it usually delivers the opposite. It spreads effort too thin, hides priorities, and wastes time on checks that do not matter yet.

Early build QA is not about proving that every possible interaction has been covered. It is about finding the problems most likely to break progression, confuse players, or expose unstable systems before the build gives you a false sense of security.

Early build QA output: signal, not volume

A good early QA testing pass does not produce the biggest checklist. It produces the clearest answer to: what breaks first, and how badly does it matter?

Core principle

The goal is not coverage. The goal is useful coverage.

Why the core gameplay loop comes first in game QA testing

In game QA testing, the first thing I want to know in an early build is whether the game can sustain its own basic loop. If the main player cycle is unstable, everything layered on top of it is secondary.

For The Chef’s Shift, that meant focusing on: prepare → tray → serve → collect → repeat. If that core gameplay loop holds, I can push further. If it breaks, that is the real story of the build.

Core loop priority

In early builds, the most valuable QA testing checks are the ones that stress the core gameplay loop, not the ones that make coverage look wide.

Why this matters

Players do not experience a build as a spreadsheet. They experience its loop. If the loop is unstable, that is the problem worth finding first.

Why I start with a clean baseline in game QA testing

In game QA testing, before stressing anything, I want one clean run. Not because a single pass proves stability, but because it tells me whether the core gameplay loop works at all in isolation.

In this project, TM-01 established that one full loop could complete cleanly. That mattered because it gave me a usable baseline: the game was not completely broken, so later failures could be tied to pressure, repetition, or interaction between systems.

Baseline does not equal confidence

A clean first run is not the result. It is just permission to move to the QA testing checks that matter more.

Why repetition matters more than variety in game QA testing

In game QA testing, one of the fastest ways to expose instability in an early build is not “more features”. It is more loops.

Repetition is where systems start interacting with their own state, where timing starts to matter, and where apparently stable flows begin to break down.

In The Chef’s Shift, the blocking issue did not show up in the clean baseline. It appeared when the core gameplay loop was repeated under pressure. Payment input was accepted, but behaviour became inconsistent, which blocked progression entirely (CHEF-1).

Repetition exposes real risk

Variety can make a test plan look rich. Repetition is what tells you whether the game can survive its own design under repeated QA testing conditions.
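The "more loops, not more features" idea can be sketched as a small harness. This is an illustration only: `run_loop_once` is a hypothetical stand-in for one manual or scripted prepare → tray → serve → collect cycle, and the simulated failure on the third loop is invented to show the mechanics. It is not data from the pass.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RepeatResult:
    loops_attempted: int
    first_failure: Optional[int]  # 1-based loop index, None if every loop passed

    @property
    def stable(self) -> bool:
        return self.first_failure is None


def repeat_loop(run_loop_once: Callable[[int], bool], max_loops: int) -> RepeatResult:
    """Run the core loop repeatedly and stop at the first failing iteration."""
    for i in range(1, max_loops + 1):
        if not run_loop_once(i):
            return RepeatResult(loops_attempted=i, first_failure=i)
    return RepeatResult(loops_attempted=max_loops, first_failure=None)


# Hypothetical stand-in: passes in isolation, fails once state has accumulated.
# The failing iteration (3) is an invented value for illustration.
def simulated_loop(iteration: int) -> bool:
    return iteration < 3


baseline = repeat_loop(simulated_loop, max_loops=1)
repeated = repeat_loop(simulated_loop, max_loops=5)
print(baseline.stable, repeated.first_failure)
```

The same function covers both the single-loop baseline and the repeated run, which keeps the two results directly comparable: a clean baseline followed by a failure at loop N points at accumulated state or pressure rather than at the loop being broken outright.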

How I separate signal from noise in early build QA

In game QA testing, a short early build pass should not try to answer every question. It should answer the questions most likely to change what you test next.

- Does the core gameplay loop work once?

- Does it keep working when repeated?

- Can invalid actions be rejected safely?

- Can the game recover cleanly after a transition or failure state?

- Do readability, prompts, and timing create player-facing friction?

Anything outside that is noise unless the build gives me a reason to follow it.
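One rough way to make that scoping explicit is a likelihood × impact score per candidate check. The sketch below is an assumption about how such a ranking could look: the `Check` type, the 1 to 5 scales, and every score are illustrative placeholders, not values recorded during the pass.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Check:
    name: str
    likelihood: int  # 1 (unlikely to fail) to 5 (very likely), assumed scale
    impact: int      # 1 (cosmetic) to 5 (blocks progression), assumed scale

    @property
    def risk(self) -> int:
        return self.likelihood * self.impact


def prioritise(checks: List[Check], budget: int) -> List[Check]:
    """Return the `budget` highest-risk checks, highest risk first."""
    return sorted(checks, key=lambda c: c.risk, reverse=True)[:budget]


# Illustrative scores only; they are placeholders, not measurements.
candidates = [
    Check("core loop completes once", likelihood=3, impact=5),
    Check("core loop survives repetition", likelihood=4, impact=5),
    Check("invalid actions are rejected safely", likelihood=3, impact=4),
    Check("recovery after transition or failure", likelihood=3, impact=4),
    Check("prompt readability under pressure", likelihood=3, impact=3),
    Check("minor animation polish", likelihood=4, impact=1),
]

for check in prioritise(candidates, budget=3):
    print(check.risk, check.name)
```

The score decides what earns time in a short pass, not the order of execution: the single clean baseline still runs first, because it is what makes later failures interpretable.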

Scoping principle

Good QA testing scoping is not about testing less. It is about testing the things that change your understanding of the build fastest.

What I deliberately do NOT test first in early builds

This is where prioritisation usually breaks down in early game QA testing.

1. Edge cases and rare scenarios

I do not start with:

- Extreme input combinations

- Long-session endurance

- Unusual player behaviour

In The Chef’s Shift, I focused on repeatable core loop execution, not edge-case exploration. That decision paid off immediately. CHEF-1 was found through repetition (3/3 repro), not through obscure or unusual scenarios.

If the main loop fails consistently, edge cases do not matter yet. You are testing the wrong problem.

2. Cosmetic and visual polish

I deprioritise:

- Minor animation issues

- Visual polish

- Small UI misalignment

The nuance that is often missed is that visual issues become a priority as soon as they affect gameplay clarity.

In The Chef’s Shift, CHEF-2 showed this clearly. The oven interaction prompt was difficult to see against the background under pressure. Technically visual, but in practice it caused:

- Missed interactions

- Delayed service

- Player confusion under pressure

That moved it from “cosmetic” to a gameplay-impacting UI issue.

3. Full feature coverage

I do not try to test everything equally in an early build.

I deliberately avoided:

- Deep coverage of non-core behaviours

- Exhaustive system permutations

- Broad exploratory passes across all features

Instead, I used charter-driven sessions (S1 to S8) focused on loop stability, input, and system interaction.

The result was 8 structured sessions, 2 meaningful issues, and clear signal without noise. That is more valuable than 20 shallow findings nobody cares about.

4. Full accessibility audits

I include accessibility as a lens, not a checklist.

In The Chef’s Shift, I applied APX-informed thinking to:

- Typing under pressure

- Readability and recognition speed

- Multitasking and cognitive load

- Prompt visibility and clarity

This revealed a shift in what limited performance. At low complexity, the limit was typing accuracy. At higher complexity, it became cognitive load and task management.

That distinction matters because not all difficulty is a bug. Some issues are player-facing friction, not system failure.

Instead of running a full audit, I focused on where accessibility directly affected core gameplay performance.

Broken systems vs player-facing friction in game QA testing

In game QA testing, one reason exhaustive testing is a waste early on is that it often treats every failure as the same kind of problem. Failures are not all alike.

In this pass, I had to separate two very different things:

- System failure: payment behaviour became inconsistent and blocked the core gameplay loop (CHEF-1).

- Player-facing friction: a prompt was difficult to see under pressure, leading to missed interaction and delayed service (CHEF-2).

Both matter, but they do not mean the same thing. One says the system is unstable. The other says the player is being poorly informed.

This is also where the accessibility lens helped. At low complexity, performance was limited mostly by typing accuracy. At higher complexity, the limiting factor shifted toward cognitive load, situational awareness, and task management.

Not every hard moment is a bug

QA testing gets stronger when it can tell the difference between a system breaking and a player being overloaded or poorly guided.
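The split between the two kinds of finding can be recorded as a simple tag at triage time. The sketch below is a hypothetical structure, not the format of the actual bug log: the `Finding` fields and the `classify` rule are assumptions, and only the two issue keys and their summaries come from the pass described above.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    SYSTEM_FAILURE = "system failure"
    PLAYER_FRICTION = "player-facing friction"


@dataclass(frozen=True)
class Finding:
    key: str
    summary: str
    system_misbehaved: bool   # did the game itself behave incorrectly?
    blocks_progression: bool  # could the player continue at all?


def classify(finding: Finding) -> Kind:
    """Assumed rule: incorrect game behaviour is a system failure,
    anything else that hurts the player is friction."""
    if finding.system_misbehaved:
        return Kind.SYSTEM_FAILURE
    return Kind.PLAYER_FRICTION


findings = [
    Finding("CHEF-1", "payment behaviour inconsistent under repetition",
            system_misbehaved=True, blocks_progression=True),
    Finding("CHEF-2", "oven prompt hard to see under pressure",
            system_misbehaved=False, blocks_progression=False),
]

for f in findings:
    print(f.key, "->", classify(f).value)
```

Keeping `blocks_progression` separate from the kind is deliberate: severity and category answer different questions, and a friction issue can still be serious without the system being wrong.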

What The Chef’s Shift proved in practice for game QA testing

Example 1: a baseline pass is useful, but not enough

In this game QA testing pass, TM-01 passed cleanly. One full core gameplay loop completed with stable input, serving, and collection flow. Useful, but not conclusive on its own.

Example 2: repeated loop testing exposed the real blocker

TM-02 attempted repeated core gameplay loop execution and hit the key defect: payment input was accepted, but behaviour became inconsistent under active multi-customer conditions. This blocked progression and immediately changed the direction of the QA testing pass.

Example 3: stable systems were worth proving too

Wrong-customer serving was correctly rejected. Retry and Continue reset or progressed state cleanly. Mistype handling remained consistent. Order association stayed clear under pressure. These were not flashy findings, but they made the overall QA testing pass more credible.

Example 4: accessibility pressure points changed the interpretation

Higher complexity did not break the system mechanically, but it changed the player burden. Slower typing improved accuracy, but reduced awareness of concurrent events. Readability and prompt visibility problems then became more significant because they affected decision speed under pressure.

Game QA testing takeaways for early builds

- In game QA testing, early build passes should be selective. Wide coverage without priorities is usually wasted effort.

- The core gameplay loop comes first because it tells you whether the build can sustain its own design.

- A clean baseline is useful, but repetition is where unstable systems usually show themselves.

- Fast, focused QA testing checks are more valuable than impressive-looking coverage if they change what you do next.

- Not every painful player moment is a bug. Some are accessibility, readability, or cognitive load problems.

- Stable systems deserve evidence too, because proving what held up strengthens the credibility of what broke.

Game QA testing FAQ (early builds)

Shouldn’t game QA testing try to cover as much as possible?

No. In game QA testing, early builds need prioritisation, not maximum breadth. The goal is to find what breaks progression or trust fastest.

Is one clean run enough to call a system stable in QA testing?

No. A clean run is a baseline, not proof. Repetition and pressure are what test stability in a QA testing pass.

Why not start with edge cases in early build QA testing?

Because edge cases matter less if the core gameplay loop cannot survive normal use. Early QA testing should protect the core experience first.

What counts as a useful early build bug in game QA testing?

Anything that blocks progression, corrupts state, breaks core interaction, or reliably misleads the player during normal use.

Are accessibility-related issues worth logging in an early QA testing pass?

Yes, if they create real player-facing friction or change decision-making under pressure. They are not “extra” if they affect progression.

How do you know when to stop testing one issue and move on in QA testing?

When the behaviour is clear, reproducible enough to explain, and supported by evidence. Beyond that, time is usually better spent on the next risk.
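That stopping rule can be written down as a small check. This is a sketch under stated assumptions: the function names and the `min_rate` threshold of 2/3 are hypothetical choices for illustration, and only the 3/3 reproduction count for CHEF-1 comes from the pass. The two boolean inputs are judgement calls made by the tester, not values the code can derive.

```python
from fractions import Fraction


def repro_rate(hits: int, attempts: int) -> Fraction:
    """Reproduction rate as an exact fraction, e.g. 3 hits in 3 attempts."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    if not 0 <= hits <= attempts:
        raise ValueError("hits must be between 0 and attempts")
    return Fraction(hits, attempts)


def ready_to_move_on(hits: int, attempts: int, behaviour_clear: bool,
                     has_evidence: bool,
                     min_rate: Fraction = Fraction(2, 3)) -> bool:
    """Assumed rule: stop digging once the issue is understood, evidenced,
    and reproduces at least `min_rate` of the time."""
    return (behaviour_clear and has_evidence
            and repro_rate(hits, attempts) >= min_rate)


# CHEF-1 reproduced 3/3; the boolean inputs here are illustrative.
print(ready_to_move_on(3, 3, behaviour_clear=True, has_evidence=True))
```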

Game QA testing evidence and case study links

- The Chef’s Shift game QA testing case study (full artefacts and evidence)

- Game QA testing articles hub

This page stays focused on game QA testing workflow and judgement in early builds. The case study links out to the workbook tabs, session logs, bug log, Jira-style tickets, and evidence clips.
